Google’s decision to block the Truth Social app’s launch on the Play Store over content moderation issues raises the question as to why Apple hasn’t taken similar action over the iOS version of the app that’s been live on the App Store since February. According to a report by Axios, Google found numerous posts that violated its Play Store content policies, blocking the app’s path to go live on its platform. But some of these same types of posts appear to be available on the iOS app, TechCrunch found.

This could trigger a re-review of Truth Social’s iOS app at some point, as both Apple’s and Google’s policies are largely aligned in terms of how apps with user-generated content must moderate their content.

Axios this week first reported Google’s decision to block the distribution of the Truth Social app on its platform, following an interview given by the app’s CEO, Devin Nunes. The former Congressman and member of Trump’s transition team, now social media CEO, suggested that the holdup with the app’s Android release was on Google’s side, saying, “We’re waiting on them to approve us, and I don’t know what’s taking so long.”

But this was a mischaracterization of the situation, Google said. After Google reviewed Truth Social’s latest submission to the Play Store, it found multiple policy violations, which it informed Truth Social about on August 19. Google also informed Truth Social as to how those problems could be addressed in order to gain entry into the Play Store, the company noted.

“Last week, Truth Social wrote back acknowledging our feedback and saying that they are working on addressing these issues,” a Google spokesperson shared in a statement. This communication between the parties was a week ahead of Nunes’s interview where he implied the ball was now in Google’s court. (The subtext to his comments, of course, was that conservative media was being censored by Big Tech once again.)

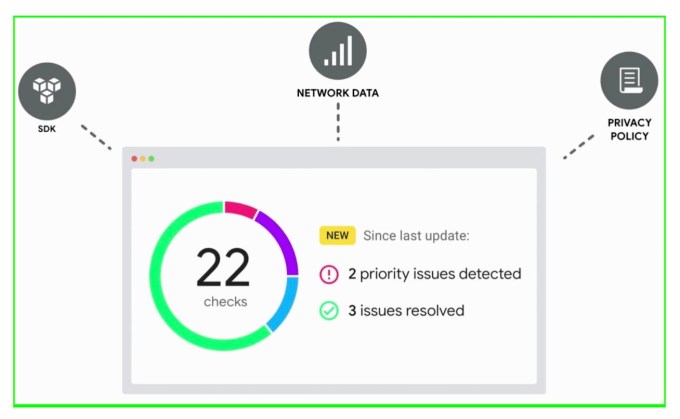

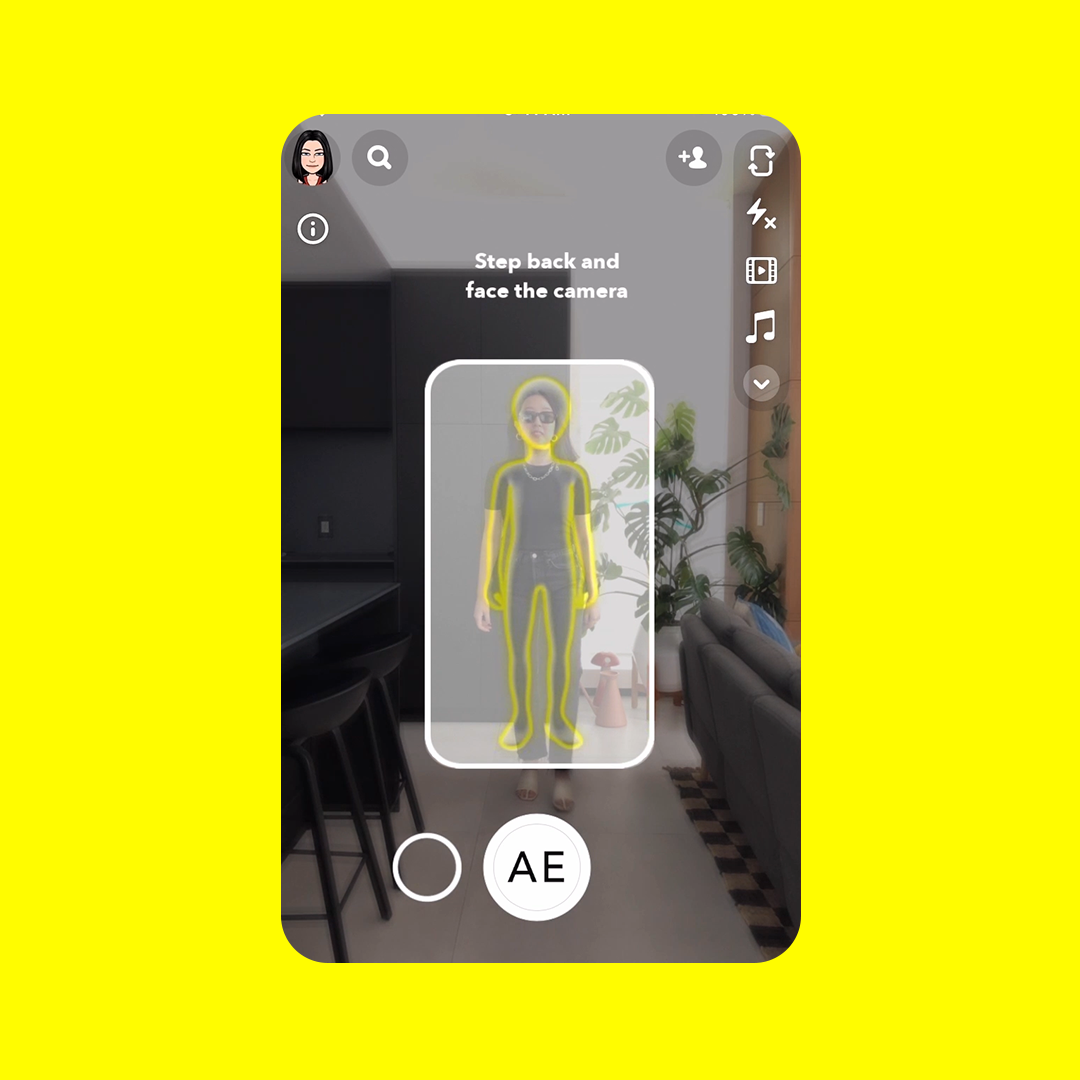

The issue at hand here stems from Google’s policy for apps that feature user-generated content, or UGC. According to this policy, apps of this nature must implement “robust, effective and ongoing UGC moderation, as is reasonable and consistent with the type of UGC hosted by the app.” Truth Social’s moderation, however, is not robust. The company has publicly said it relies on an automated AI moderation system, Hive, which is used to detect and censor content that violates its own policies. On its website, Truth Social notes that human moderators “oversee” the moderation process, suggesting that it uses an industry-standard blend of AI and human moderation. (Of note, the app store intelligence firm Apptopia told TechCrunch the Truth Social mobile app is not using the Hive AI. But it says the implementation could be server-side, which would be beyond the scope of what it can see.)

Truth Social’s use of AI-powered moderation doesn’t necessarily mean the system is sufficient to bring it into compliance with Google’s own policies. The quality of AI detection systems varies, and those systems ultimately enforce a set of rules that a company itself decides to implement. According to Google, several Truth Social posts it encountered contained physical threats and incitements to violence, both of which the Play Store policy prohibits.

Image Credits: Truth Social’s Play Store listing

We understand Google specifically pointed to the language in its User Generated Content policy and Inappropriate Content policy when making its determination about Truth Social. These policies include the following requirements:

Apps that contain or feature UGC must:

- require that users accept the app’s terms of use and/or user policy before users can create or upload UGC;

- define objectionable content and behaviors (in a way that complies with Play’s Developer Program Policies), and prohibit them in the app’s terms of use or user policies;

- implement robust, effective and ongoing UGC moderation, as is reasonable and consistent with the type of UGC hosted by the app

and

- Hate Speech – We don’t allow apps that promote violence, or incite hatred against individuals or groups based on race or ethnic origin, religion, disability, age, nationality, veteran status, sexual orientation, gender, gender identity, caste, immigration status, or any other characteristic that is associated with systemic discrimination or marginalization.

- Violence – We don’t allow apps that depict or facilitate gratuitous violence or other dangerous activities.

- Terrorist Content – We don’t allow apps with content related to terrorism, such as content that promotes terrorist acts, incites violence, or celebrates terrorist attacks.

And while users may be able to initially post such content — no system is perfect — an app with user-generated content like Truth Social (or Facebook or Twitter, for that matter) would need to be able to take down those posts in a timely fashion in order to be considered in compliance.

In the interim, the Truth Social app is not technically “banned” from Google Play — in fact, Truth Social is still listed for pre-order today, as Nunes also pointed out. It could still make changes to come into compliance, or it could choose another means of distribution.

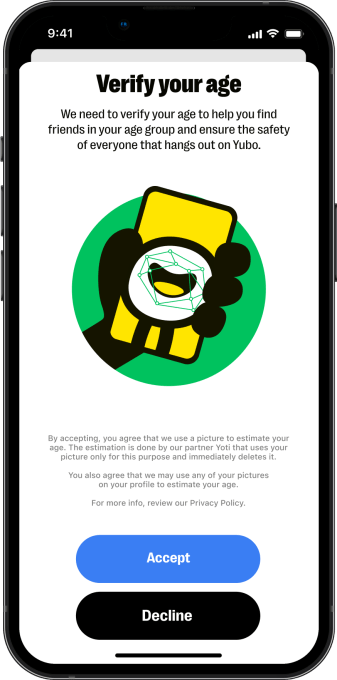

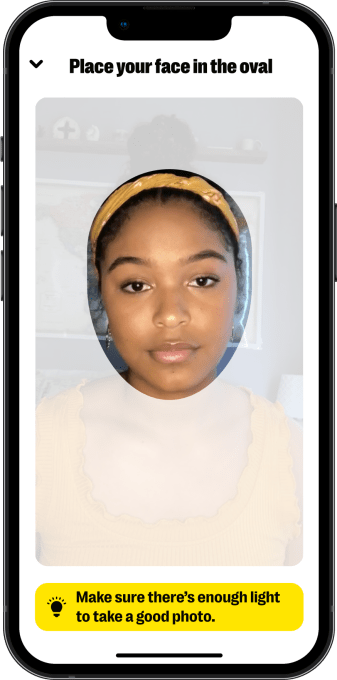

While Truth Social decides its course for Android, an examination of posts on Truth Social’s iOS version revealed a range of anti-semitic content, including Holocaust denial, as well as posts promoting the hanging of public officials and others (including those in the LGBTQ+ community), posts advocating for civil war, posts in support of white supremacy, and many other categories that would seem to be in violation of Apple’s own policies around objectionable content and UGC apps. Few were behind a moderation screen.

It’s not clear why Apple has not taken action against Truth Social, as the company hasn’t commented. One possibility is that, at the time of Truth Social’s original submission to Apple’s App Store, the brand-new app had very little content for an App Review team to parse, so didn’t have any violative content to flag. Truth Social does use content filtering screens on iOS to hide some posts behind a click-through warning, but TechCrunch found the use of those screens to be haphazard. While the content screens obscured some posts that appeared to break the app’s rules, the screens also obscured many posts that did not contain objectionable content.

Assuming Apple takes no action, Truth Social would not be the first app to grow out of the pro-Trump online ecosystem and find a home on the App Store. A number of other apps designed to lure the political right with lofty promises about an absence of censorship have also obtained a green light from Apple.

Social networks Gettr and Parler and video sharing app Rumble all court roughly the same audience with similar claims of “hands off” moderation and are available for download on the App Store. Gettr and Rumble are both available on the Google Play Store, but Google removed Parler in January 2021 for inciting violence related to the Capitol attack and has not reinstated it since.

All three apps have ties to Trump. Gettr was created by former Trump advisor Jason Miller, while Parler launched with the financial blessing of major Trump donor Rebekah Mercer, who took a more active role in steering the company after the January 6 attack on the U.S. Capitol. Late last year, Rumble struck a content deal with former President Trump’s media company, Trump Media & Technology Group (TMTG), to provide video content for Truth Social.

Many social networks were implicated in the Jan. 6 attack, both mainstream social networks and apps explicitly catering to Trump supporters. On Facebook, election conspiracy theorists flocked to popular groups and organized openly around hashtags including #RiggedElection and #ElectionFraud. Parler users featured prominently among the rioters who rushed into the U.S. Capitol, and Gizmodo identified some of those users through GPS metadata attached to their video posts.

Today, Truth Social is a haven for political groups and individuals that were ousted from mainstream platforms over concerns that they might incite violence. Former President Trump, who founded the app, is the most prominent deplatformed figure to set up shop there, but Truth Social also offers a refuge to QAnon, a cult-like political conspiracy theory that has been explicitly barred from mainstream social networks like Twitter, YouTube and Facebook due to its association with acts of violence.

Over the last few years alone, that violence has included a California father who said he shot his two children with a speargun due to his belief in QAnon delusions, a New York man who killed a mob boss and appeared in court with a “Q” written on his palm, and various incidents of domestic terrorism that preceded the Capitol attack. In late 2020, Facebook and YouTube both tightened their platform rules to clean up QAnon content after years of allowing it to flourish. In January 2021, Twitter alone cracked down on a network of more than 70,000 accounts sharing QAnon-related content, with other social networks following suit and taking the threat seriously in light of the Capitol attack.

A report released this week by media watchdog NewsGuard details how the QAnon movement is alive and well on Truth Social, where a number of verified accounts continue to promote the conspiracy theory. Former President Trump; Devin Nunes, Truth Social’s CEO and a former House representative; and Patrick Orlando, CEO of Truth Social’s financial backer Digital World Acquisition Corporation (DWAC), have all promoted QAnon content in recent months.

Earlier this week, former President Trump launched a blitz of posts explicitly promoting QAnon, openly citing the conspiracy theory linked to violence and domestic terrorism rather than relying on coded language to speak to its supporters as he has in the past. That escalation, paired with the ongoing federal investigation into Trump’s alleged mishandling of high-stakes classified information (a situation that’s already inspired real-world violence), raises the stakes on a social app where the former president is able to openly communicate with his followers in real time.

That Google would take preemptive action to keep Truth Social from the Play Store while Apple is, so far, allowing it to operate is an interesting shift in the two tech giants’ policies over app store moderation and policing. Historically, Apple has taken a heavier hand in App Store moderation, culling apps that weren’t up to standards, were poorly designed, too adult, too spammy, or even just operating in a gray area that Apple later decided needed enforcement. Why Apple is hands-off in this particular instance isn’t clear, but the company has come under intense federal scrutiny in recent months over its interventionist approach to the lucrative app marketplace.
